Three months into 2026, the AI landscape looks radically different from even a year ago. OpenAI’s GPT-5.4, Anthropic’s Claude 4.6 Opus, and Google’s Gemini 3.1 Pro have each pushed the boundaries of what large language models can do, and choosing between them has never been harder. Flagship context windows have ballooned to one million tokens or more. Reasoning benchmarks that seemed impossible in 2025 are now routine. And pricing models have shifted enough that the “best” choice depends heavily on what you need the AI to do.

We spent weeks running these models through real-world tasks — writing, coding, research, image analysis, and complex reasoning — so you don’t have to guess. This is the definitive breakdown of how GPT-5.4, Claude 4.6 Opus, and Gemini 3.1 Pro stack up in 2026. If you’re new to AI assistants, our Beginner’s Guide to AI in 2026 is a great place to start before diving in.

Quick Overview: What’s New in Each Model

Before we get into the head-to-head comparisons, here’s a rapid summary of what each company brought to the table with their latest flagship releases.

GPT-5.4 (OpenAI) — Released March 5, 2026

OpenAI took a modular approach with GPT-5.4, splitting it into three distinct variants: Standard, Thinking, and Pro. Standard is the everyday workhorse — fast, affordable, and capable. Thinking adds an explicit chain-of-thought reasoning layer for complex problems. Pro is the no-compromise option with maximum context (1.05 million tokens) and the highest benchmark scores. This tiered strategy lets users (and developers) pick exactly the capability-cost tradeoff they need rather than paying for overkill on simple tasks.

Claude 4.6 Opus (Anthropic) — The Developer Favorite

Anthropic’s Claude 4.6 Opus cemented its reputation as the most-loved developer tool in recent industry surveys, and for good reason. With a 1 million token context window, exceptional code generation and understanding, and Anthropic’s continued emphasis on safety and helpfulness, Claude 4.6 Opus excels at tasks requiring nuance — long document analysis, careful instruction-following, and extended coding sessions where context retention matters.

Gemini 3.1 Pro (Google) — The Integration Powerhouse

Google’s Gemini 3.1 Pro made headlines by scoring 77.1% on ARC-AGI-2, a benchmark designed to test genuine reasoning and abstraction. But the real story for most users is its deep integration with Google Workspace — Docs, Sheets, Gmail, Calendar, and more. Gemini 3.1 Pro is natively multimodal, handling text, images, audio, and video in a single model architecture, and its generous free tier through Google products makes it the most accessible of the three.

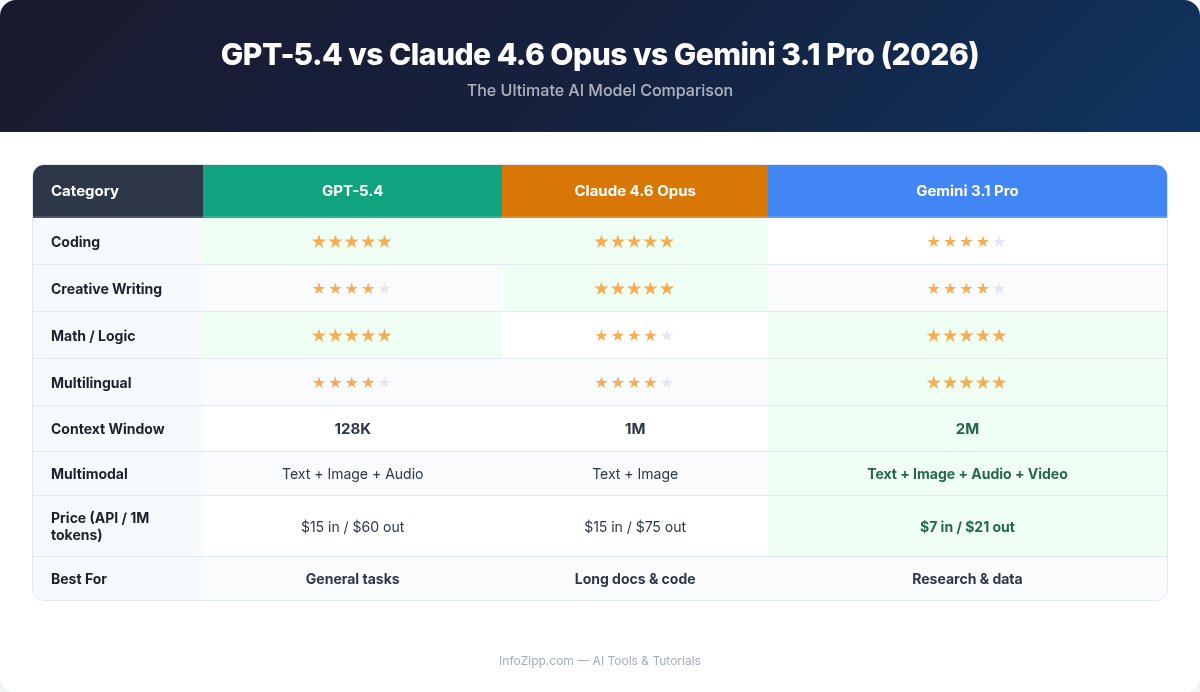

Head-to-Head Comparison Table

Numbers tell a story that paragraphs sometimes can’t. Here’s how the three models compare across key specifications and capabilities.

| Feature | GPT-5.4 (Standard / Thinking / Pro) | Claude 4.6 Opus | Gemini 3.1 Pro |
|---|---|---|---|
| Max Context Window | 200K / 512K / 1.05M tokens | 1M tokens | 1M tokens |
| API Input Pricing | $2 / $5 / $15 per 1M tokens | $15 per 1M tokens | $7 per 1M tokens |
| API Output Pricing | $8 / $20 / $60 per 1M tokens | $75 per 1M tokens | $21 per 1M tokens |
| Chat Subscription | $20/mo (Plus) / $200/mo (Pro) | $20/mo (Pro) / $100/mo (Max) / $200/mo (Team) | Free tier / $20/mo (Advanced) |
| Multimodal Input | Text, images, audio, video, files | Text, images, files, code | Text, images, audio, video, files |
| Image Generation | Yes (native DALL-E integration) | No | Yes (Imagen 3) |
| Web Search | Yes (built-in browsing) | Yes (web search tool) | Yes (Google Search grounding) |
| ARC-AGI-2 Score | ~68% (Pro variant) | ~72% | 77.1% |
| Best For | Versatility, ecosystem, multimodal | Coding, long-context, precision | Reasoning, Google integration, value |

Writing Quality: Clarity, Tone, and Nuance

All three models produce excellent writing in 2026, but their styles and strengths differ in ways that matter depending on your use case.

GPT-5.4 remains the most versatile writer. It adapts tone seamlessly — give it a brand voice guide and it nails it. Marketing copy, blog posts, emails, creative fiction: GPT-5.4 Standard handles all of these capably, and the Thinking variant adds a noticeable lift in argumentative writing and structured analysis. The downside is a lingering tendency toward slightly generic phrasing if you don’t steer it carefully with system prompts.

Claude 4.6 Opus has a distinctive writing character: precise, thoughtful, and naturally well-structured. It excels at long-form content where maintaining coherence across thousands of words matters. Technical writing, policy documents, and detailed analyses come out polished without extensive prompting. Claude also follows complex formatting instructions more reliably than the competition — a small thing that saves enormous editing time in production workflows.

Gemini 3.1 Pro writes well but tends toward a slightly more conversational, Google-assistant-like tone by default. Where it shines is research-informed writing: thanks to its Google Search grounding, it pulls in current facts and citations naturally. For content that needs to be both well-written and factually grounded — news summaries, market reports, academic overviews — Gemini has an edge.

Verdict: Claude 4.6 Opus for long-form precision and instruction-following. GPT-5.4 for versatile tone matching. Gemini 3.1 Pro for research-backed writing with current data.

Coding and Development: From Quick Scripts to Large Codebases

This is where the competition gets fierce. All three models have dramatically improved their coding abilities, but the gaps between them are meaningful for professional developers.

Claude 4.6 Opus is the standout for coding in 2026. It’s not an accident that it’s been rated the #1 most-loved developer tool — the combination of a 1 million token context window (meaning it can digest an entire medium-sized codebase at once), excellent code comprehension, and careful attention to edge cases makes it the go-to for serious development work. Claude Code, Anthropic’s CLI tool, has become a standard part of many engineering workflows. For refactoring, debugging, and understanding unfamiliar codebases, Claude 4.6 Opus is hard to beat.

GPT-5.4 Thinking is the strongest OpenAI variant for coding. Its explicit reasoning layer helps it plan multi-step implementations and catch logical errors that Standard misses. GPT-5.4 Pro pushes further still, handling the most complex algorithmic challenges. The broader OpenAI ecosystem — including deep integrations with VS Code via GitHub Copilot — remains a major advantage for developers already in that workflow. (For a deeper dive into AI coding tools, see our Claude Code vs Cursor vs Copilot comparison.)

Gemini 3.1 Pro is competitive and improving rapidly. Its strength in code is closely tied to its reasoning capabilities — the model is excellent at understanding the intent behind code and suggesting architecturally sound solutions. Google’s integration with Android Studio and Firebase also makes it the natural choice for mobile developers in the Google ecosystem.

Verdict: Claude 4.6 Opus for most development work, especially long-context tasks. GPT-5.4 Thinking/Pro for ecosystem integration and algorithmic challenges. Gemini 3.1 Pro for Google-stack development.

Research and Analysis: Making Sense of Complex Information

The ability to ingest large documents, synthesize information from multiple sources, and deliver clear analysis is one of the most practically valuable AI capabilities. Here’s how each model performs.

Context window size matters enormously for research tasks. Claude 4.6 Opus (1M tokens), Gemini 3.1 Pro (1M tokens), and GPT-5.4 Pro (1.05M tokens) all offer massive context — enough to process entire books or hundreds of pages of research papers in a single session. GPT-5.4 Standard’s 200K limit, while substantial, can feel restrictive for heavy research workflows.
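To sanity-check whether a document will fit before you upload it, a rough estimate is usually enough. The sketch below is illustrative only: the window sizes come from the comparison table, while the 4-characters-per-token ratio, the 8,000-token output reserve, and the function names `estimate_tokens` and `models_that_fit` are our own assumptions, not anything the vendors publish. Real tokenizers vary by model and language, so treat the result as a first pass.

```python
# Context window sizes (tokens) taken from the comparison table in this article.
CONTEXT_WINDOWS = {
    "GPT-5.4 Standard": 200_000,
    "GPT-5.4 Thinking": 512_000,
    "GPT-5.4 Pro": 1_050_000,
    "Claude 4.6 Opus": 1_000_000,
    "Gemini 3.1 Pro": 1_000_000,
}

# Assumption: roughly 4 characters per token for English prose.
# This is a rule of thumb, not an exact tokenizer.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Rough token estimate for a piece of text (rounded up)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def models_that_fit(token_count: int, reserve_for_output: int = 8_000) -> list[str]:
    """List models whose window holds the input plus a reserve for the reply.

    The default reserve is a hypothetical value chosen for illustration.
    """
    needed = token_count + reserve_for_output
    return [name for name, window in CONTEXT_WINDOWS.items() if window >= needed]


# Example: a bundle of research papers estimated at 300,000 tokens.
print(models_that_fit(300_000))
```

With these numbers, a 300,000-token input rules out GPT-5.4 Standard and leaves the other four options in play.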

Claude 4.6 Opus handles long-document analysis with exceptional fidelity. Feed it a 300-page report and ask about a detail on page 247 — it finds it reliably. The model’s careful, precise nature means it’s less likely to hallucinate facts or misattribute claims from source material. For academic research, legal document review, and financial analysis, this reliability is critical.

GPT-5.4 Pro slightly edges ahead on sheer context capacity at 1.05M tokens and brings strong analytical reasoning. The Thinking variant is particularly useful for multi-step analysis — it “shows its work” in a way that makes it easier to verify conclusions. OpenAI’s browsing capabilities also make GPT-5.4 effective for real-time research that combines uploaded documents with web sources.

Gemini 3.1 Pro has a unique advantage: Google Search grounding is built into its DNA. For research tasks that require pulling in current information — market data, recent publications, news events — Gemini’s integration with Google’s search index is unmatched. It also handles multimodal research well, analyzing charts, graphs, and images alongside text. For a specialized research alternative, our Perplexity AI research guide is also worth reading.

Verdict: Claude 4.6 Opus for precision and long-document fidelity. Gemini 3.1 Pro for web-grounded and multimodal research. GPT-5.4 Pro for combining large context with structured reasoning.

Multimodal Capabilities: Beyond Text

The AI models of 2026 are far more than text generators. Multimodal capabilities — handling images, audio, video, and files — have become a key differentiator.

GPT-5.4 offers the most complete multimodal package. It accepts text, images, audio, and video as inputs, and can generate both text and images (via integrated DALL-E). For workflows that involve describing images, transcribing audio, analyzing video frames, and producing visual assets, GPT-5.4 is a one-stop shop. The Pro variant’s processing of multimodal inputs is noticeably more nuanced than Standard.

Gemini 3.1 Pro is natively multimodal in its architecture — it was designed from the ground up to process different modalities simultaneously rather than bolting them on. This gives it an edge in tasks that require understanding relationships between visual and textual information: analyzing presentations, understanding video content in context, or processing complex documents with charts and diagrams. Imagen 3 integration also means solid image generation directly within Gemini.

Claude 4.6 Opus handles images and documents well but doesn’t process audio or video natively, and it cannot generate images. For vision tasks it performs admirably — code screenshot analysis, chart reading, document OCR — but its multimodal scope is narrower than the other two. Anthropic has deliberately prioritized depth over breadth here, and for text-centric and code-centric workflows, the tradeoff makes sense.

Verdict: GPT-5.4 for the broadest multimodal capabilities. Gemini 3.1 Pro for natively integrated multimodal understanding. Claude 4.6 Opus if your work is primarily text and code.

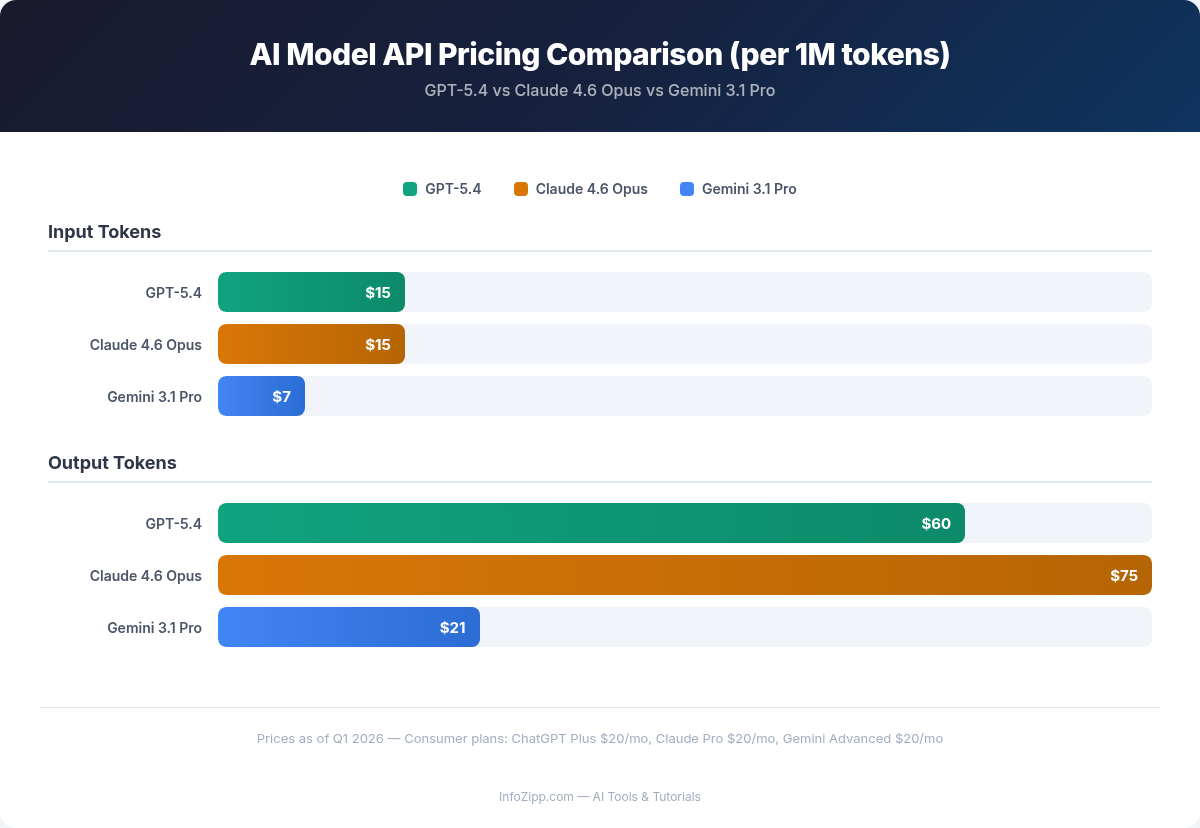

Pricing: Cost vs. Capability Tradeoffs

Pricing in 2026’s AI market has become more nuanced as providers offer tiered models and usage-based plans. The right choice depends heavily on your volume and use case.

For Individual Users (Chat Subscriptions)

All three providers offer a $20/month tier that gives access to their flagship models with usage limits. Google’s Gemini stands out by offering a generous free tier through Google products — if you’re a casual user with a Google account, you may not need to pay at all. OpenAI’s $200/month ChatGPT Pro plan unlocks unlimited GPT-5.4 Pro access, while Anthropic offers Claude Max at $100/month for heavy users who need extended conversations and higher limits.

For Developers and Businesses (API Pricing)

This is where the differences are stark. GPT-5.4 Standard is the cheapest option at $2 per million input tokens, ideal for high-volume applications where you don’t need maximum reasoning power. Gemini 3.1 Pro sits in the middle at $7 per million input tokens, offering strong capability per dollar. Claude 4.6 Opus and GPT-5.4 Pro are both premium-priced at $15 per million input tokens, but their output token costs tell the real story: Claude 4.6 Opus at $75 per million output tokens is the most expensive for generation-heavy workloads, while GPT-5.4 Pro is $60 per million.

For businesses scaling AI applications, this pricing gap is significant. At the list prices above, GPT-5.4 Standard costs about 87% less than Claude 4.6 Opus on input tokens and about 89% less on output tokens, so a customer service chatbot that handles thousands of conversations daily might cut its API bill by more than 85% by switching, assuming the quality difference is acceptable for that use case.
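If you want to run the numbers for your own traffic, the arithmetic is simple enough to script. Here is a minimal sketch using the per-million-token prices from our comparison table; the workload figures (5,000 conversations a day, 1,500 input and 500 output tokens each) and the helper names `monthly_cost` and `savings_percent` are hypothetical, picked only to make the example concrete.

```python
# Per-million-token API prices (USD) copied from the comparison table in this article.
PRICES = {
    "GPT-5.4 Standard": {"input": 2.0, "output": 8.0},
    "GPT-5.4 Thinking": {"input": 5.0, "output": 20.0},
    "GPT-5.4 Pro": {"input": 15.0, "output": 60.0},
    "Claude 4.6 Opus": {"input": 15.0, "output": 75.0},
    "Gemini 3.1 Pro": {"input": 7.0, "output": 21.0},
}


def monthly_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """USD cost of a workload, given total input and output token counts."""
    price = PRICES[model]
    return (input_tokens * price["input"] + output_tokens * price["output"]) / 1_000_000


def savings_percent(cheaper: str, pricier: str, input_tokens: int, output_tokens: int) -> float:
    """Percentage saved by running the same workload on the cheaper model."""
    low = monthly_cost(cheaper, input_tokens, output_tokens)
    high = monthly_cost(pricier, input_tokens, output_tokens)
    return 100.0 * (1.0 - low / high)


# Hypothetical workload: 5,000 conversations/day for 30 days,
# 1,500 input tokens and 500 output tokens per conversation.
conversations = 5_000 * 30
input_tokens = conversations * 1_500
output_tokens = conversations * 500

for name in PRICES:
    print(f"{name:18s} ${monthly_cost(name, input_tokens, output_tokens):>10,.2f}")

saved = savings_percent("GPT-5.4 Standard", "Claude 4.6 Opus", input_tokens, output_tokens)
print(f"Standard vs Opus saving: {saved:.1f}%")
```

On this made-up workload the monthly bill comes to $1,050 on GPT-5.4 Standard versus $9,000 on Claude 4.6 Opus. Your own ratio of input to output tokens will shift the exact figure, so plug in real usage data before making a decision.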

Verdict: Gemini 3.1 Pro for best overall value (especially with the free tier). GPT-5.4 Standard for budget-conscious API users. Claude 4.6 Opus and GPT-5.4 Pro are premium options justified by premium performance in specialized tasks.

AI Tools Hub Verdict: Which Model Should You Choose?

After extensive testing across dozens of real-world scenarios, here is our recommendation at AI Tools Hub — broken down by use case, because no single model wins everywhere.

Choose GPT-5.4 if you need…

- Maximum versatility: Three variants let you match capability to task. Use Standard for quick queries, Thinking for analysis, Pro for the hardest problems.

- Broad multimodal support: Images, audio, video, and generation — all in one place.

- Ecosystem integration: GitHub Copilot, Microsoft 365, and the largest third-party plugin ecosystem.

- Cost optimization at scale: GPT-5.4 Standard’s low API pricing makes it ideal for high-volume applications.

Choose Claude 4.6 Opus if you need…

- Best-in-class coding: Rated the #1 most-loved developer tool for a reason. Claude Code and the Anthropic API are exceptional for engineering teams.

- Long-document precision: When you need to process hundreds of pages and trust every detail of the output.

- Instruction-following reliability: Complex formatting, multi-step workflows, and strict adherence to guidelines.

- Safety-conscious deployment: Anthropic’s Constitutional AI approach makes Claude a strong choice for regulated industries.

Choose Gemini 3.1 Pro if you need…

- Google Workspace integration: If your team lives in Gmail, Docs, Sheets, and Drive, Gemini is the seamless choice.

- Advanced reasoning: Its 77.1% ARC-AGI-2 score is the highest of the three and points to strong abstract problem-solving.

- Best value for money: A powerful free tier and competitive API pricing make it the most accessible flagship model.

- Multimodal research: Native handling of text, images, audio, and video with Google Search grounding.

If you’re exploring beyond these three, our roundup of the 10 Best ChatGPT Alternatives in 2026 covers additional options worth considering. And for a look at how the previous generation compared, check our original ChatGPT vs Claude vs Gemini comparison.

The Bottom Line

The honest truth in March 2026 is that all three models are excellent, and the gaps between them are narrower than marketing materials suggest. For most everyday tasks — drafting emails, answering questions, basic research — you’d be well-served by any of them.

The meaningful differences emerge at the edges: Claude 4.6 Opus pulls ahead for serious development work and long-document analysis. GPT-5.4’s tiered approach gives you the most flexibility to balance cost and capability. Gemini 3.1 Pro’s reasoning benchmarks and Google integration make it the smart pick for users already in that ecosystem.

Our strongest recommendation? Don’t lock yourself into one model. The most productive AI users in 2026 maintain access to at least two of these tools and route tasks to whichever model handles them best. The $20/month cost for a secondary subscription pays for itself within hours when you’re using the right tool for the right job.
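For readers who like to make this kind of routing explicit, here is one way it could look as a lookup table. This is a sketch, not a recommendation engine: the first choice for each task follows the verdicts in this article, but the task categories, the fallback ordering, and the names `Task`, `PREFERENCES`, and `pick_model` are all assumptions made for illustration.

```python
from enum import Enum


class Task(Enum):
    LONG_FORM_WRITING = "long_form_writing"
    CODING = "coding"
    WEB_RESEARCH = "web_research"
    IMAGE_GENERATION = "image_generation"
    HIGH_VOLUME_CHAT = "high_volume_chat"


# First choice per task follows this article's verdicts; the fallback order
# after the first entry is an assumption made for illustration.
PREFERENCES = {
    Task.LONG_FORM_WRITING: ["Claude 4.6 Opus", "GPT-5.4", "Gemini 3.1 Pro"],
    Task.CODING: ["Claude 4.6 Opus", "GPT-5.4", "Gemini 3.1 Pro"],
    Task.WEB_RESEARCH: ["Gemini 3.1 Pro", "GPT-5.4", "Claude 4.6 Opus"],
    # Claude 4.6 Opus is left out here because it cannot generate images.
    Task.IMAGE_GENERATION: ["GPT-5.4", "Gemini 3.1 Pro"],
    Task.HIGH_VOLUME_CHAT: ["GPT-5.4", "Gemini 3.1 Pro", "Claude 4.6 Opus"],
}


def pick_model(task: Task, subscriptions: set[str]) -> str:
    """Return the most preferred model for a task among those you subscribe to."""
    for model in PREFERENCES[task]:
        if model in subscriptions:
            return model
    raise LookupError(f"No subscribed model can handle {task.value}")


# Example: someone who pays for Claude and uses Gemini as a second tool.
my_subscriptions = {"Claude 4.6 Opus", "Gemini 3.1 Pro"}
for task in Task:
    print(f"{task.value:18s} -> {pick_model(task, my_subscriptions)}")
```

The point of writing it down is less the code than the habit: deciding ahead of time which tool gets which job saves you from defaulting to whichever tab happens to be open.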

The AI model race isn’t slowing down — if anything, the pace of improvement is accelerating. We’ll continue to update this comparison as new versions and capabilities emerge throughout 2026.

Alex Kim is an AI tools reviewer and tech writer with over 5 years of experience evaluating SaaS and AI products. He specializes in hands-on comparisons of AI writing assistants, coding tools, and productivity software — testing each tool through real-world workflows before publishing any recommendation. His mission at AI Tools Hub is to help readers cut through the hype and find AI tools that actually deliver results.