When OpenAI launched GPT-5.4 on March 5, 2026, they didn’t just release another incremental update — they fundamentally changed how we think about AI model selection. Instead of one model to rule them all, GPT-5.4 arrived as three distinct variants: Standard, Thinking, and Pro. Each targets a different use case and price point, and the differences between them are far more than cosmetic.

After three weeks of intensive testing across writing, coding, research, creative tasks, and API integration, here’s our comprehensive verdict on whether GPT-5.4 lives up to the hype — and which variant is worth your money.

What’s New: The Three Variants Explained

Previous GPT releases gave you a single model (with occasional “turbo” or “mini” alternatives). GPT-5.4 breaks that mold entirely. Think of it like a car manufacturer offering the same platform in three trims — same engineering DNA, different capabilities and price tags.

GPT-5.4 Standard

The everyday workhorse. Standard is designed for speed and affordability — it responds quickly, handles conversational tasks with ease, and costs a fraction of the premium variants. With a 200K token context window, it’s more than adequate for most interactions. If you’re using ChatGPT Plus ($20/month), this is the model you’ll interact with most frequently.

- Context window: 200,000 tokens

- API pricing: $2 per 1M input tokens / $8 per 1M output tokens

- Speed: Fastest of the three variants

- Best for: General conversations, quick writing tasks, simple coding, brainstorming

GPT-5.4 Thinking

The reasoning specialist. Thinking includes an explicit chain-of-thought reasoning layer that activates for complex problems. You can literally see the model’s reasoning process (when enabled), which makes it easier to verify logic and catch errors. The 512K context window opens up significantly more complex document analysis, and the reasoning boost is noticeable on math, logic, and multi-step planning tasks.

- Context window: 512,000 tokens

- API pricing: $5 per 1M input tokens / $20 per 1M output tokens

- Speed: Moderate (reasoning steps add latency)

- Best for: Complex analysis, math and science, strategic planning, debugging code

GPT-5.4 Pro

The no-compromise flagship. Pro pushes every dial to maximum: the largest context window of any commercial model at 1.05 million tokens, the highest benchmark scores, and the most nuanced outputs. It’s also the most expensive by a wide margin. The ChatGPT Pro subscription ($200/month) provides unlimited access to this variant — a steep price that’s justified for power users who depend on top-tier AI daily.

- Context window: 1,050,000 tokens

- API pricing: $15 per 1M input tokens / $60 per 1M output tokens

- Speed: Slowest (thorough processing of large contexts)

- Best for: Large-scale research, enterprise applications, maximum-quality generation, complex codebases
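
To make the per-token prices concrete, here is a minimal Python sketch that estimates the cost of a single API request from the prices listed above. The variant labels (`standard`, `thinking`, `pro`), the `estimate_cost` helper, and the example token counts are illustrative names and numbers chosen for this sketch, not OpenAI identifiers.

```python
from decimal import Decimal

# USD per 1M tokens, copied from the variant specs in this review.
# The dictionary keys are labels for this sketch, not official model IDs.
PRICES_PER_MILLION = {
    "standard": {"input": Decimal("2"), "output": Decimal("8")},
    "thinking": {"input": Decimal("5"), "output": Decimal("20")},
    "pro": {"input": Decimal("15"), "output": Decimal("60")},
}

MILLION = Decimal(1_000_000)


def estimate_cost(variant: str, input_tokens: int, output_tokens: int) -> Decimal:
    """Return the estimated USD cost of one request for the given variant."""
    price = PRICES_PER_MILLION[variant]
    return (
        price["input"] * input_tokens + price["output"] * output_tokens
    ) / MILLION


if __name__ == "__main__":
    # Hypothetical request: 10,000 input tokens and 1,000 output tokens.
    for name in PRICES_PER_MILLION:
        print(f"{name}: ${estimate_cost(name, 10_000, 1_000):.4f}")
```

Under these assumptions, the same request costs about $0.028 on Standard, $0.07 on Thinking, and $0.21 on Pro.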

Writing Performance: How Good Is the Output?

We tested all three variants across multiple writing tasks: blog posts, marketing copy, technical documentation, creative fiction, and email drafts.

Standard handles everyday writing capably. Blog posts come out well-structured and readable. Marketing copy has the right energy. Emails are professional without being stiff. The quality is a clear step up from GPT-4o — sentences feel more natural, and the model is better at maintaining a consistent voice across longer pieces. Where Standard stumbles slightly is on highly nuanced writing tasks: persuasive essays with complex arguments, or creative fiction that requires deep character consistency over many pages.

Thinking adds a noticeable lift for analytical and argumentative writing. When we asked it to produce a competitive analysis report, the output was structured more logically, considered counterarguments, and presented evidence more persuasively than Standard’s version. The reasoning layer doesn’t just help with math — it improves the architecture of written arguments.

Pro produces the highest-quality writing across the board, but the improvement over Thinking is less dramatic than the gap between Standard and Thinking. Where Pro truly shines in writing is on extremely long-form content: its 1.05M context window lets it stay coherent across 50-page documents, referencing details from early sections late in the piece without losing the thread.

Our take: Standard is sufficient for 80% of writing tasks. Upgrade to Thinking when the quality of reasoning in your writing matters. Reserve Pro for book-length projects or when maximum polish is non-negotiable.

Coding Capabilities: A Developer’s Perspective

GPT-5.4’s coding improvements are substantial, though the story is nuanced across the three variants.

Standard is a reliable coding companion for everyday tasks. It generates correct code for standard patterns, explains concepts well, helps with debugging straightforward issues, and autocompletes functions capably. For junior developers or anyone writing scripts, utilities, and standard web applications, Standard is more than adequate.

Thinking is where GPT-5.4’s coding prowess gets interesting. The reasoning layer translates directly into better debugging — the model traces through code execution step-by-step, catching logical errors that Standard misses. We tested it with a deliberately buggy Python data pipeline (three interconnected bugs across different modules), and Thinking identified all three, while Standard found only the most obvious one. For algorithmic problems and system design, Thinking’s ability to reason through tradeoffs before generating code results in cleaner architectures.

Pro is the beast mode for coding. Its 1.05M token context window means you can feed it an entire medium-sized codebase and ask questions or request modifications with full awareness of the project’s architecture. We tested it by uploading a 400-file TypeScript project and asking it to refactor the authentication system — Pro understood the existing patterns, identified dependencies, and produced a coherent refactoring plan with correct code changes. That said, for raw coding ability, Claude 4.6 Opus remains a formidable competitor (and many developers prefer it — see our Claude Code vs Cursor vs Copilot comparison for details).

Our take: GPT-5.4 Thinking hits the sweet spot for most developers — better reasoning at a reasonable price. Pro is worth it for teams working on large, complex codebases where context window size is the limiting factor.

Research and Analysis: Handling Complex Information

One of GPT-5.4’s biggest selling points is its expanded context windows. We tested each variant’s ability to process, synthesize, and reason about large volumes of information.

Standard’s 200K context window handles most research tasks well. You can upload a 100-page PDF and ask detailed questions about it with reliable results. It falters with extremely specific queries about details buried deep in long documents — sometimes paraphrasing when you need exact quotes, or conflating similar concepts from different sections.

Thinking’s 512K window and reasoning layer improve research quality significantly. The model is better at cross-referencing information from different parts of a document, identifying contradictions, and building structured summaries that capture nuance. For academic research, financial analysis, and legal document review at moderate scale, Thinking is the right choice.

Pro’s 1.05M token context is the largest commercially available, edging out Claude 4.6 Opus (1M) and Gemini 3.1 Pro (1M) by a small margin. In practical testing, the difference between 1M and 1.05M tokens is negligible — what matters is that all three top-tier models can now handle book-length inputs. Pro’s combined context size and reasoning power make it exceptional for tasks like analyzing entire codebases, processing multi-hundred-page legal contracts, or synthesizing research across dozens of papers simultaneously.
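
A quick way to see which variant a document fits into is to compare an estimated token count against the three window sizes. The sketch below is a rough pre-check, not a tokenizer: the 4-characters-per-token ratio is a common rule of thumb for English text, the 8,000-token output reserve is an arbitrary example value, and it assumes the window has to hold both input and output. The function names are hypothetical.

```python
# Context window sizes in tokens, as listed in this review.
CONTEXT_WINDOWS = {
    "standard": 200_000,
    "thinking": 512_000,
    "pro": 1_050_000,
}

# Assumption: roughly 4 characters per token for English text.
# A real tokenizer will give different counts.
CHARS_PER_TOKEN = 4


def rough_token_count(text: str) -> int:
    """Estimate tokens from character length (heuristic, not a tokenizer)."""
    return -(-len(text) // CHARS_PER_TOKEN)  # ceiling division


def variants_that_fit(token_count: int, reserve_for_output: int = 8_000) -> list[str]:
    """List the variants whose window holds the input plus an output reserve."""
    needed = token_count + reserve_for_output
    return [name for name, window in CONTEXT_WINDOWS.items() if window >= needed]


if __name__ == "__main__":
    # Hypothetical document sizes, in tokens.
    for size in (150_000, 300_000, 900_000):
        print(size, variants_that_fit(size))
```

With these numbers, a 150,000-token input fits all three variants, 300,000 tokens rules out Standard, and 900,000 tokens leaves only Pro.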

OpenAI’s built-in browsing capability adds another dimension. Unlike static document analysis, GPT-5.4 can pull in real-time web data during research sessions — current stock prices, latest news, recent publications — and synthesize it alongside your uploaded materials.

Multimodal Features: Images, Audio, and Video

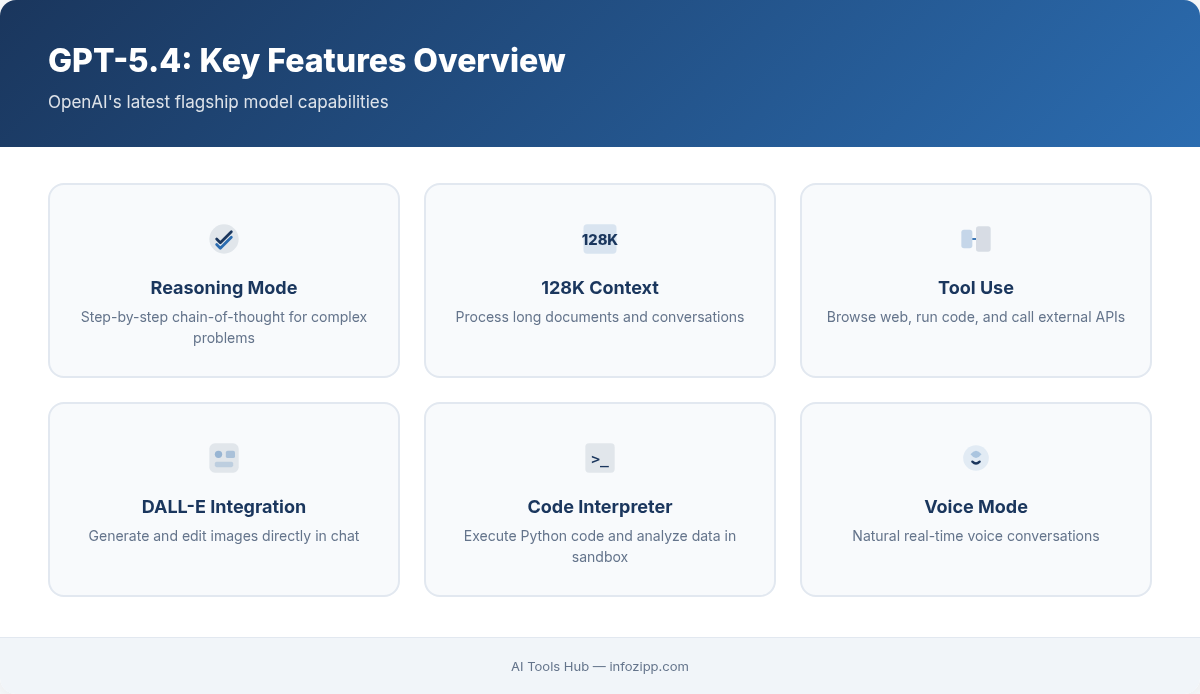

GPT-5.4 continues OpenAI’s multimodal ambitions with support for text, image, audio, and video inputs across all variants, plus image generation via integrated DALL-E.

Image understanding has improved markedly. Standard can accurately describe complex images, read text from photos, analyze charts, and understand spatial relationships. Thinking adds reasoning about images — “this graph shows a declining trend that contradicts the claim in paragraph 3” — which is useful for fact-checking and analysis. Pro processes images with the highest fidelity and can handle large batches of images within its massive context window.

Audio processing now includes real-time transcription, translation, speaker identification, and tone analysis. The quality is competitive with dedicated transcription services, and having it integrated into the same model that processes your text prompts creates seamless workflows — transcribe a meeting, then immediately generate action items and follow-up emails.

Video input (available in Thinking and Pro) allows the model to analyze video frames and audio tracks together. While it doesn’t process video in real-time for extended clips, it handles short videos and key-frame analysis well. Use cases include analyzing product demos, reviewing recorded presentations, and extracting information from tutorial videos.

Image generation through DALL-E integration remains one of GPT-5.4’s unique advantages over Claude 4.6 Opus (which lacks image generation entirely). The quality of generated images continues to improve, with better text rendering, more consistent style adherence, and improved photorealism.

How GPT-5.4 Stacks Up Against the Competition

No review of GPT-5.4 is complete without addressing its two primary competitors: Claude 4.6 Opus and Gemini 3.1 Pro.

| Category | GPT-5.4 Pro | Claude 4.6 Opus | Gemini 3.1 Pro |
|---|---|---|---|
| Writing Quality | Excellent | Excellent | Very Good |
| Coding | Excellent | Best in Class | Very Good |
| Reasoning | Excellent | Excellent | Best in Class |
| Multimodal | Best in Class | Limited | Excellent |
| Value for Money | Moderate | Premium | Best in Class |

The short version: Claude 4.6 Opus remains the top choice for coding and long-document precision. Gemini 3.1 Pro leads on reasoning benchmarks (77.1% on ARC-AGI-2 vs GPT-5.4 Pro’s ~68%) and offers the best value. GPT-5.4 wins on versatility and multimodal breadth.

For the full head-to-head breakdown with detailed benchmarks and pricing analysis, read our complete GPT-5.4 vs Claude 4.6 Opus vs Gemini 3.1 Pro comparison.

AI Tools Hub Verdict: Who Should Use Which Variant?

After extensive testing, here’s our guidance on which GPT-5.4 variant suits different types of users. With three tiers, there’s no single “right” answer.

GPT-5.4 Standard Is Right For:

- Casual and moderate users who want a reliable AI assistant for daily tasks

- Businesses building chatbots or customer-facing AI where cost per query matters

- Writers and marketers who need fast, good-enough drafts to refine

- Anyone on a budget: at $2/M input tokens, it’s the most affordable of the three variants

GPT-5.4 Thinking Is Right For:

- Developers who need better debugging and architectural reasoning

- Analysts and researchers working with moderately complex documents

- Students and academics tackling math, science, and logic problems

- Content creators producing analytical or argumentative writing where reasoning quality matters

GPT-5.4 Pro Is Right For:

- Enterprise teams processing massive documents or entire codebases

- Researchers synthesizing information across dozens of long papers

- Power users who need the absolute best output quality and can justify the premium

- Professionals in regulated fields (legal, medical, financial) where accuracy is paramount
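
For teams that route requests programmatically, the guidance above can be condensed into a few rules. The following is one possible sketch, not an official selection method: the `Task` fields, the `pick_variant` function, and the returned labels are all hypothetical, and the thresholds simply reuse the context window sizes and the video-input availability described in this review.

```python
from dataclasses import dataclass


@dataclass
class Task:
    input_tokens: int              # estimated size of prompt plus documents
    needs_reasoning: bool = False  # multi-step logic, debugging, analysis
    has_video: bool = False        # video input (Thinking and Pro only, per this review)
    max_quality: bool = False      # polish matters more than cost


def pick_variant(task: Task) -> str:
    """Return the cheapest variant label that satisfies the task's needs."""
    if task.input_tokens > 1_050_000:
        raise ValueError("input exceeds the largest listed context window")
    if task.max_quality or task.input_tokens > 512_000:
        return "pro"
    if task.needs_reasoning or task.has_video or task.input_tokens > 200_000:
        return "thinking"
    return "standard"


if __name__ == "__main__":
    print(pick_variant(Task(input_tokens=5_000)))                        # short chat
    print(pick_variant(Task(input_tokens=5_000, needs_reasoning=True)))  # debugging
    print(pick_variant(Task(input_tokens=600_000)))                      # large codebase
```

The rules check the most expensive requirement first, so a task only lands on Pro when quality or input size actually demands it.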

If you’re still exploring which AI tool is right for you, our list of 10 Best ChatGPT Alternatives covers the full landscape. And if you’re brand new to AI assistants, start with our Beginner’s Guide to AI in 2026.

The Bottom Line

GPT-5.4 is genuinely impressive — not because any single variant is the undisputed best AI model (Claude 4.6 Opus arguably outperforms it for coding, Gemini 3.1 Pro beats it on reasoning benchmarks), but because the three-variant approach gives you unprecedented flexibility. For the first time, you can match your AI model to your task without switching providers.

Standard delivers strong performance at low cost. Thinking adds genuine analytical depth at a moderate premium. Pro goes head-to-head with the most capable models in the world and holds its own.

The biggest criticism? The pricing gap between variants is steep. Going from Standard ($2/M input) to Pro ($15/M input) is a 7.5x increase, and output tokens rise by the same 7.5x factor ($8/M to $60/M). For API users scaling applications, careful variant selection isn’t just an optimization; it’s a budget survival strategy.
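
To put a number on that budget impact, the sketch below compares sending every request to Pro against splitting traffic across the three variants. The workload (1 million requests a month, 2,000 input and 500 output tokens each) and the 80/15/5 split are made-up example values, and `monthly_cost` is a hypothetical helper; only the prices come from this review.

```python
# USD per 1M tokens as (input, output), from this review.
PRICES = {
    "standard": (2.0, 8.0),
    "thinking": (5.0, 20.0),
    "pro": (15.0, 60.0),
}


def monthly_cost(requests: int, in_tokens: int, out_tokens: int, mix: dict) -> float:
    """Estimate monthly USD spend.

    mix maps a variant label to its share of requests; shares must sum to 1.
    """
    if abs(sum(mix.values()) - 1.0) > 1e-9:
        raise ValueError("request shares must sum to 1")
    total = 0.0
    for variant, share in mix.items():
        in_price, out_price = PRICES[variant]
        per_request = (in_tokens * in_price + out_tokens * out_price) / 1_000_000
        total += requests * share * per_request
    return total


if __name__ == "__main__":
    all_pro = monthly_cost(1_000_000, 2_000, 500, {"pro": 1.0})
    routed = monthly_cost(
        1_000_000, 2_000, 500,
        {"standard": 0.80, "thinking": 0.15, "pro": 0.05},
    )
    print(f"All Pro: ${all_pro:,.0f}")
    print(f"Routed:  ${routed:,.0f}")
```

For this hypothetical workload, routing most traffic to Standard brings the estimate from about $60,000 a month down to about $12,400.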

OpenAI has delivered a worthy flagship for 2026. Whether it’s the right model for you depends entirely on what you need it to do — and thanks to the three-variant structure, there’s a GPT-5.4 that fits almost every use case and budget.

Alex Kim is an AI tools reviewer and tech writer with over 5 years of experience evaluating SaaS and AI products. He specializes in hands-on comparisons of AI writing assistants, coding tools, and productivity software — testing each tool through real-world workflows before publishing any recommendation. His mission at AI Tools Hub is to help readers cut through the hype and find AI tools that actually deliver results.